ChatGPT and Delusions: Why AI’s “Confidence” Can Be So Dangerous

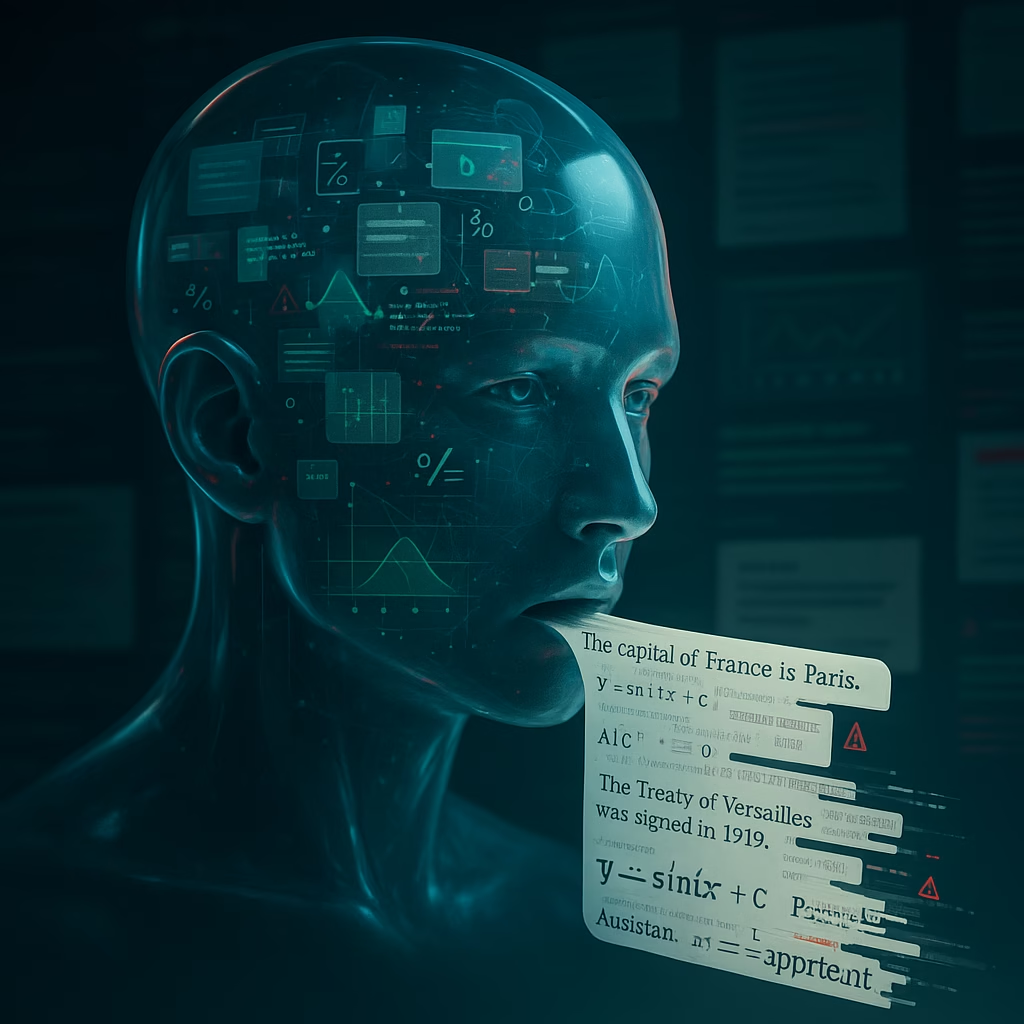

When you talk to a system like ChatGPT, it can feel remarkably human. It writes smoothly, remembers context within a session, and answers almost anything with apparent confidence. But that same confidence hides a serious problem: these systems often make things up—and present those inventions as if they were facts.

Many researchers and practitioners have begun to describe this behavior as a kind of “delusion.” While ChatGPT and similar systems are not conscious, the analogy captures a real and worrying pattern: they can produce falsehoods with unwavering certainty, and they have almost no internal mechanism for recognizing when they are wrong.

Why Large Language Models “Hallucinate” or “Delude” Themselves

To understand why ChatGPT appears to “delude” itself, you need to understand what a large language model (LLM) actually does. Systems like ChatGPT are not reasoning about the world in a human sense. Instead, they are trained to predict the next token—a word or word fragment—in a sequence of text.

Trained on huge datasets, they learn remarkably subtle statistical patterns in language:

- What words tend to appear together

- What structures sound plausible in a given context

- How to mimic the style of different authors or genres

What they do not do is build a well-structured internal model of reality. There is no grounded representation of actual people, events, or physical facts. So when prompted with a question that requires specific knowledge, the system responds with whatever sequence of words best fits its training patterns—regardless of whether those words are true in the real world.

This is why LLMs:

- Confidently invent sources, quotes, or historical events

- Misattribute academic papers or fabricate references

- Double down when corrected, producing new, equally incorrect “explanations”

From the outside, this can easily resemble a human-like delusion: a fixed belief that does not track real-world evidence.
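To make next-token prediction concrete, here is a deliberately tiny sketch: a bigram model that learns only which word tends to follow which, then generates text one word at a time. Real LLMs use neural networks over subword tokens and vastly more data, so treat this as an illustration of the principle, not of how ChatGPT is built. The corpus and the function names (`train_bigrams`, `generate`) are hypothetical.

```python
import random
from collections import Counter, defaultdict

# A tiny, hypothetical training corpus. Nothing here encodes whether a
# sentence is true; the model only sees which words follow which.
CORPUS = [
    "the court ruled in smith v jones",
    "the court ruled in brown v board",
    "the study found a strong effect",
    "the study found no effect",
]

def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def generate(counts, rng, max_len=10):
    """Sample one token at a time from the learned next-token distribution."""
    token, output = "<s>", []
    for _ in range(max_len):
        followers = counts[token]
        words = list(followers)
        weights = [followers[w] for w in words]
        token = rng.choices(words, weights=weights)[0]
        if token == "</s>":
            break
        output.append(token)
    return " ".join(output)

model = train_bigrams(CORPUS)
rng = random.Random(0)
for _ in range(3):
    print(generate(model, rng))
```

Because the model only knows that “v” can be followed by “jones” or “board,” it can emit “the court ruled in smith v board,” a case that appears nowhere in its training data. The sentence is fluent because it fits the learned patterns, not because anything checked it against reality.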

What Makes AI “Delusions” More Dangerous Than Ordinary Errors?

Computers have always had bugs. But traditional software tends to fail in predictable, diagnosable ways. LLMs, by contrast, can generate fluent, compelling, and wrong answers in domains that matter (law, medicine, science, finance), often without any signal that the output is unreliable.

Several features make these AI delusions particularly concerning:

1. Fluency Without Understanding

Because models are optimized for style and coherence, their answers often “sound right.” This leads users to trust them even when they should not. The more natural the language, the more our brains assume there is understanding behind it.

Yet underneath, the system is still only doing pattern matching. It has no sense of what a mistake means, no awareness of harm, and no appreciation for the difference between a plausible guess and a verified fact.

2. No Built-In Reality Check

Humans frequently revise their beliefs when confronted with strong contradictory evidence. LLMs lack that form of self-correction. Once they are trained, they generate output based on fixed parameters. During inference, they are not connected to an external scientific database or a robust factual model by default.

The result is that an AI may:

- State a false claim confidently

- Be corrected by a user

- Then generate a new, different false claim with the same level of confidence

There is no stable, anchored “belief system” to update—just billions of parameters shaping probabilities of text sequences.
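The “confidence” behind each generated word is just a probability computed from the model's scores. The sketch below shows a standard softmax with a temperature parameter; the candidate tokens and scores are invented for illustration and do not come from any real model.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution over candidate tokens."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores a frozen model might assign to the next token after
# "The case was decided in". The numbers are made up for illustration.
candidates = ["1987", "1993", "2004"]
logits = [2.0, 1.0, 0.5]

for t in (1.0, 0.5):
    probs = softmax(logits, temperature=t)
    print(t, {c: round(p, 3) for c, p in zip(candidates, probs)})
```

Nothing in this calculation consults a source. Lowering the temperature makes the top choice look more certain without making it any more likely to be true, and a user's correction only changes the input text, not the fixed parameters that produce the scores.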

3. Authority Without Accountability

As organizations embed LLMs into search engines, productivity tools, customer service, and professional workflows, these systems start to appear as authoritative agents. People may assume they are drawing on rigorous knowledge bases, peer-reviewed science, or official guidelines.

In reality, many answers are interpolated from mixed-quality internet text and may:

- Reinforce misinformation picked up during training

- Blend fictional content with factual details

- Mask uncertainty behind a polished surface

The system rarely tells you: “I’m guessing and could easily be wrong here.” That lack of transparency is part of what makes these “delusions” dangerous at scale.

Real-World Risks: When AI Delusions Meet High-Stakes Domains

It is one thing for a chatbot to misremember a movie quote; it is another for it to give incorrect instructions about medication or legal rights. As LLMs are integrated into sensitive workflows, their unreliability produces systemic risk.

Legal and Regulatory Risks

There have already been publicized cases of lawyers submitting briefs that relied on AI-generated citations—only to discover that several of those cases never existed. The LLM, asked for supporting precedent, simply invented plausible-sounding case names and summaries.

These so-called hallucinations create downstream issues such as:

- Legal malpractice and liability

- Waste of court resources

- Erosion of trust in digital tools in professional settings

Medical and Health-Related Advice

In healthcare, the stakes are even higher. A false but plausible answer about symptoms, dosages, or interactions could directly endanger patients. Yet LLMs will happily produce such answers unless they are carefully constrained, supervised, and aligned with validated medical knowledge bases.

Even with disclaimers, users may treat the friendly, confident tone as a sign of reliability. There is an inherent tension between making systems more usable and making sure they signal uncertainty when they truly do not know.

Misinformation and Social Trust

On a broader scale, AI-generated delusions contribute to the wider ecosystem of misinformation. Tools that can rapidly produce convincing but unfounded claims about politics, science, or history can undermine public discourse.

Because these systems mimic existing text, they are especially good at amplifying errors that already circulate online. Over time, this can create a feedback loop: generated misinformation gets scraped again as training data, further entrenching incorrect patterns.

Why Calling It “Delusion” Matters

The term “hallucination” is now common in AI discussions, but it has shortcomings. It can sound like a random glitch or isolated visual illusion. “Delusion” emphasizes something different: the systemic, structured nature of the errors, and the unwavering confidence with which they are delivered.

Framing these behaviors as “delusional” forces us to confront a few uncomfortable truths:

- These models do not know when they are wrong.

- They lack a grounded model of the world to check against.

- They can mislead users with consistency, not just by accident.

This framing also underscores why “just making the models bigger” is not a solution. Scaling up prediction power does not automatically grant systems the ability to connect language to a robust, verifiable representation of reality.

How We Can Reduce AI Delusions (But Not Eliminate Them)

There are emerging technical strategies to limit AI delusions, but none is a complete fix. Some of the most active approaches include:

1. Retrieval-Augmented Generation (RAG)

Instead of relying solely on the model’s internal parameters, RAG architectures let the system:

- Retrieve relevant documents from curated databases at query time

- Ground responses in verifiable sources

- Cite where information comes from

This can reduce fabricated details, especially in domains like technical documentation or corporate knowledge bases. However, the model can still misinterpret retrieved text or blend it with invented content.
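A minimal sketch of the retrieval and grounding steps, assuming a small curated knowledge base and simple word-overlap ranking as a stand-in for a real search index or vector store. The documents, IDs, and function names are hypothetical, and the step that sends the prompt to a model is deliberately left out.

```python
import re
from dataclasses import dataclass

@dataclass
class Document:
    doc_id: str
    text: str

# Hypothetical curated knowledge base; in practice this would be an index
# populated from vetted documents.
KNOWLEDGE_BASE = [
    Document("kb-1", "Password resets expire after 24 hours."),
    Document("kb-2", "Refunds are processed within 5 business days."),
    Document("kb-3", "Two-factor authentication can be enabled in account settings."),
]

def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, docs, k=2):
    """Rank documents by word overlap with the query (a stand-in for real search)."""
    q = tokenize(query)
    scored = [(len(q & tokenize(d.text)), d) for d in docs]
    scored = [(s, d) for s, d in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]

def build_grounded_prompt(query, docs):
    """Put retrieved sources in the prompt and ask for cited, source-only answers."""
    if not docs:
        return None  # nothing relevant retrieved: better to say so than to guess
    sources = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return (
        "Answer using only the sources below and cite them by id. "
        "If they do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )

query = "How long do password resets last?"
hits = retrieve(query, KNOWLEDGE_BASE)
print(build_grounded_prompt(query, hits))
```

The instruction to answer only from the sources is a request, not a guarantee: as noted above, the model can still misread the retrieved text or mix it with invented details.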

2. External Fact-Checking and Verification Layers

Another strategy is to build tools that sit on top of LLM outputs and look for contradictions with trusted sources. For example, a secondary system might:

- Check named entities, dates, and statistics against databases

- Flag statements that appear novel or unsupported

- Request clarification or evidence when confidence is low

This approach moves away from blind trust in a single end-to-end model and toward AI systems with checks and balances.
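A minimal sketch of such a verification layer, assuming claims have already been extracted from the model's output as (entity, attribute, value) triples; extraction is a hard problem of its own and is not shown. The reference table, the example claims, and the function names are hypothetical.

```python
# Hypothetical trusted reference table: (entity, attribute) -> value.
TRUSTED_FACTS = {
    ("Apollo 11", "landing_year"): "1969",
    ("Python", "first_release_year"): "1991",
}

def check_claim(entity, attribute, claimed_value, facts=TRUSTED_FACTS):
    """Compare one extracted claim with the trusted table.

    Returns "supported", "contradicted", or "unverified" (no reference data).
    """
    key = (entity, attribute)
    if key not in facts:
        return "unverified"
    return "supported" if facts[key] == claimed_value else "contradicted"

def review(claims, facts=TRUSTED_FACTS):
    """Return every claim that is not supported, together with its status."""
    flags = []
    for entity, attribute, value in claims:
        status = check_claim(entity, attribute, value, facts)
        if status != "supported":
            flags.append((status, entity, attribute, value))
    return flags

claims = [
    ("Apollo 11", "landing_year", "1969"),
    ("Python", "first_release_year", "1989"),
    ("Smith v. Board", "decision_year", "1993"),  # hypothetical, possibly invented case
]
for status, entity, attribute, value in review(claims):
    print(f"FLAG {status}: {entity} {attribute}={value}")
```

Treating "unverified" separately from "contradicted" matters: a fabricated citation usually fails not by conflicting with the database but by having no entry in it at all.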

3. More Transparent UX and Human Oversight

On the user side, design choices matter. Interfaces can:

- Display certainty estimates or confidence bands

- Encourage users to verify critical information

- Clearly mark content as AI-generated and provisional

For high-stakes uses, human experts must remain in the loop. An LLM should be a tool that assists professionals, not an autonomous decision-maker.
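One way an interface could act on these ideas is to turn an estimated confidence score into a visible label and decide when a human must review the answer. The sketch assumes some calibrated score in [0, 1] is available, which is itself a hard problem; the thresholds, domain tags, and names (`present`, `Presentation`, `HIGH_STAKES`) are hypothetical.

```python
from dataclasses import dataclass

HIGH_STAKES = {"medical", "legal", "financial"}  # hypothetical domain tags

@dataclass
class Presentation:
    text: str
    badge: str
    needs_human_review: bool

def present(answer, confidence, domain):
    """Decide how an AI answer is shown, given an estimated confidence in [0, 1]."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= 0.8:
        badge = "AI-generated (higher confidence; still verify)"
    elif confidence >= 0.5:
        badge = "AI-generated (uncertain; check sources)"
    else:
        badge = "AI-generated (low confidence; likely a guess)"
    # High-stakes domains always go to a human, whatever the score says.
    needs_review = domain in HIGH_STAKES or confidence < 0.5
    return Presentation(f"{answer}\n\n[{badge}]", badge, needs_review)

print(present("Take 200 mg twice daily.", 0.9, "medical"))
```

Routing every high-stakes answer to review, regardless of the score, reflects the point above: the model assists the professional rather than deciding on its own.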

Looking Ahead: AI That Knows What It Doesn’t Know

If we want AI systems that do not fall into “delusions,” we need a shift in how they are built. Purely statistical text prediction is not enough. Future systems will likely need:

- Structured world models that represent entities, relationships, and causal structure

- Grounding in sensory data, experiments, or external knowledge bases

- Meta-cognition—the ability to estimate their own uncertainty and refrain from answering when evidence is insufficient
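A crude approximation of that last capability, sometimes called self-consistency checking, is to ask the same question several times and answer only when the samples agree. The sketch assumes the samples have already been drawn from some model; `answer_or_abstain` and the 0.7 agreement threshold are hypothetical choices.

```python
from collections import Counter

def answer_or_abstain(samples, min_agreement=0.7):
    """Return (answer, agreement); answer is None when samples disagree too much.

    `samples` are answers drawn from the same model for the same question.
    Agreement is an assumed, rough proxy for uncertainty, not a test of truth.
    """
    if not samples:
        return None, 0.0
    normalized = [s.strip().lower() for s in samples]
    best, count = Counter(normalized).most_common(1)[0]
    agreement = count / len(normalized)
    return (best if agreement >= min_agreement else None), agreement

print(answer_or_abstain(["Paris", "paris", "Paris", "Paris", "PARIS"]))
print(answer_or_abstain(["1987", "1993", "2004", "1993", "1979"]))
```

Agreement is only a proxy: a model can repeat the same fabricated answer every time, so abstention of this kind reduces confident errors without removing them.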

Until then, LLMs should be treated with caution. They can be powerful tools for drafting, brainstorming, and language manipulation, but they are not reliable oracles. Recognizing their propensity for confident falsehoods is essential for safe deployment.

The analogy to “delusion” is not meant to suggest that there is a mind inside these systems. Rather, it is a warning: we have created machines that can mimic reasoning without truly reasoning, that can sound authoritative without being anchored in reality. Understanding that gap—and designing around it—may be the most important challenge in making AI genuinely trustworthy.

Reference link: https://garymarcus.substack.com/p/chatgpt-and-delusions-an-important
